The ability of data centre operators and providers to adapt to the latest market trends and innovations is essential to maintaining a commercial ‘edge’. Ironically, perhaps, one of the biggest market trends is edge computing.

Edge computing is not a new technology, having evolved from the content delivery networks of the 1990s that were designed to serve video and file transfer services, but its real benefits have only been realised in the last few years. This is due to changes in adjacent technologies such as conventional data centres and national and international data infrastructure, and to changes in viewing, gaming and personal data habits.

There is currently no all-encompassing definition of “edge” computing, but the definitions share a common theme: the decentralisation of data processing from traditional data centres and cloud repositories to local, or edge, facilities. To understand why this is occurring, the relationship between the volume of data generated and the bandwidth available to transfer it needs examining.

Currently, “around 10% of enterprise-generated data is created and processed outside a traditional centralized data center or cloud. By 2025, Gartner predicts this figure will reach 75%.” [1]

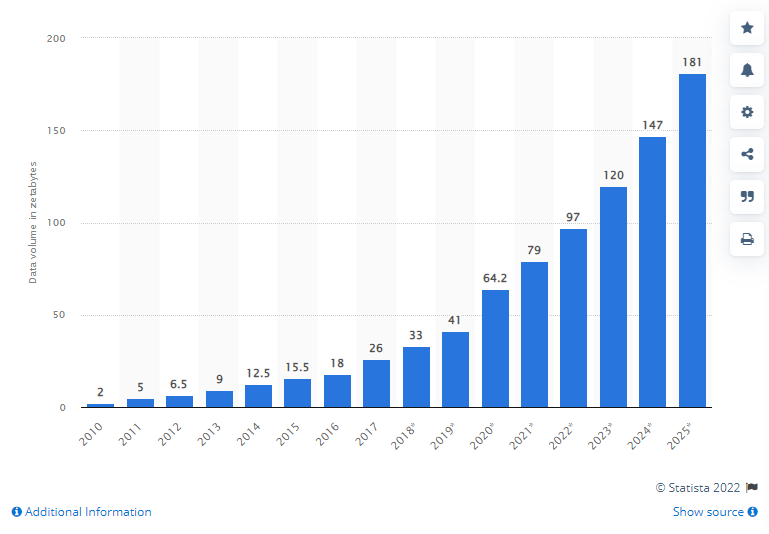

Figure 1 shows the amount of data generated globally [2]. The rise is almost exponential year on year and, when extrapolated, reaches approximately 181 zettabytes (ZB) generated by the end of 2025. Of this, 90ZB will be created by IoT (Internet of Things) devices.

IoT devices generate significant volumes of real-time data (from status and location to actionable information) across multiple streams. Their development, together with the increasing volume of enterprise-generated data, has outstripped the development of network infrastructure, creating issues with volume transfer and network latency when data is processed centrally. Fundamentally, this is data for which real-time or near-real-time processing matters more than long-term storage or capture.

Preparing for widespread deployment of edge computing

In addition to professional and industry use, the leisure and personal generation and processing of data and information is driving a requirement for localised processing power and edge nodes. Traditionally, residential developments may have had centralised facilities for electrical services (substation distribution) and heating (district heating schemes).

The increase in video security, doorbells and smart home devices, and the requirement for resilient infrastructure networks to support them, even before considering video gaming, streaming and working-from-home services, is an issue that edge computing is well placed to resolve. An edge computing facility included within larger-scale residential developments can reduce the bandwidth required into the network, as well as reducing latency for the critical applications detailed above. For example, when a new show expected to attract high viewership is released on a streaming platform, it can be distributed to the residential edge facility and accessed locally, instead of each viewer connecting directly to the central streaming servers, reducing both latency and bandwidth use.
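The streaming example can be put into rough numbers. The sketch below is illustrative only: the function name, the 500 homes and the 25 Mbps bitrate are assumptions rather than figures from any real development, and it simplifies by assuming the edge node pulls a single copy of the stream at the playback bitrate.

```python
def upstream_demand_mbps(viewers: int, bitrate_mbps: float, edge_cached: bool) -> float:
    """Peak bandwidth drawn from the central streaming servers (simplified model)."""
    if viewers <= 0:
        return 0.0
    if edge_cached:
        # Assumption: the edge node fetches one copy and serves every local viewer.
        return bitrate_mbps
    # Without an edge node, every viewer holds a separate connection to the centre.
    return viewers * bitrate_mbps


# Hypothetical development: 500 homes watching the same 25 Mbps stream at once.
direct = upstream_demand_mbps(500, 25.0, edge_cached=False)
cached = upstream_demand_mbps(500, 25.0, edge_cached=True)
print(f"Direct: {direct:.0f} Mbps, via edge cache: {cached:.0f} Mbps")
```

Under these assumptions the demand on the wider network falls from the sum of all viewers' streams to a single stream, which is the effect described above.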

Edge computing (and, to a lesser extent, end user nodes) can offer solutions to these issues. The scale of indiscriminate data capture is perhaps clearest in the oil industry, where each rig may have 30,000 sensors but only 1% of the IoT device data generated is actually processed into meaningful insights or a useful form.

If we accept that the volume of data generated will only increase and become more important across the professional and personal sectors, and that this growth will outstrip the ability to deliver main infrastructure capacity, there is a need to assess in greater detail the quality and quantity of data that is distributed through the network and how it is processed and stored.

Some data has both immediate uses, such as identifying whether a sensor or device has failed and needs maintenance, and longer-term value, such as identifying failure rates, patterns or the percentage of time out of operation.
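As a minimal sketch of this dual use, the same status samples can answer an immediate question (does the device need attention now?) and a longer-term one (what share of the time was it out of operation?). The `StatusSample` type, the function names and the sample history are hypothetical and for illustration only; the code assumes Python 3.9 or later.

```python
from dataclasses import dataclass


@dataclass
class StatusSample:
    hour: int          # hour at which the sample was taken
    operational: bool  # True if the device reported as working


def needs_maintenance(latest: StatusSample) -> bool:
    """Immediate use: flag a device whose latest sample reports a failure."""
    return not latest.operational


def downtime_percent(history: list[StatusSample]) -> float:
    """Longer-term use: percentage of samples in which the device was down."""
    if not history:
        return 0.0
    down = sum(1 for sample in history if not sample.operational)
    return 100.0 * down / len(history)


# Hypothetical history: eight hourly samples, with the device down in hours 3 and 4.
history = [StatusSample(hour=h, operational=h not in (3, 4)) for h in range(8)]
print(needs_maintenance(history[3]), downtime_percent(history))
```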

What the future looks like

We posit, therefore, that the benefits of edge computing are best realised as part of a holistic data processing and storage strategy, one that identifies the importance and temporality of data at or close to source. Short-term, low-latency applications and requirements are managed through edge computing, which processes the data to reduce its volume and to pick out the more important data. This is then transferred to conventional offsite data halls, where it can be stored at a reduced cost per TB for the longer term to drive insights, or made available to interface elsewhere on the network.
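One way to picture this split is a small edge routine that acts on urgent readings locally and forwards only a compact summary for long-term storage. This is a sketch under stated assumptions, not a description of any particular deployment: the function name, the threshold and the readings are all hypothetical.

```python
from statistics import mean


def process_at_edge(readings, alert_threshold):
    """Split raw readings into local alerts and a reduced summary for central storage."""
    if not readings:
        return [], {"count": 0}
    # Low-latency path: readings above the threshold are handled at the edge.
    alerts = [value for value in readings if value > alert_threshold]
    # Volume reduction: only a summary, not every raw reading, is sent offsite.
    summary = {"count": len(readings), "mean": mean(readings), "max": max(readings)}
    return alerts, summary


# Hypothetical temperature readings from one sensor, with one abnormal spike.
readings = [20.0, 21.0, 19.0, 95.0, 20.0]
alerts, summary = process_at_edge(readings, alert_threshold=80.0)
print(alerts, summary)
```

Here five raw readings become one local alert and a three-field summary; a real system would choose what to keep according to the importance and temporality of each data stream.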

The wider adoption and availability of edge computing does, however, present challenges compared to traditional data centres. For example, the reduced size decreases electrical and thermal efficiency, especially of plant, because economies of scale are lost. Additionally, decentralisation brings increased real estate costs, which will be passed on to the end user if facilities are located in conurbations or residential areas. The sector as a whole is also being challenged on renewable energy. There is an opportunity here, if considered at the right stage, to integrate the electrical requirements into district generation and to use the waste heat produced to heat adjacent buildings or dwellings.

How BCS are delivering the change to clients

With the electrical capacity for building additional large or hyperscale data centres limited in conurbations like Dublin and London, the redevelopment of existing smaller sites, coupled with a drive to be more energy efficient, will be a focus for the coming years. The volume of these projects has increased significantly in the last 18 months.

One of the trends that we at BCS are seeing is that first-generation data centres, which are typically low density, in city centre locations and without fully resilient infrastructure, are now at the end of their lives, having been operational for circa 20 years. The majority of these sites are smaller and in adapted buildings, which may have lower floor-to-ceiling heights and permissible loads.

We are now working with clients to understand how best these sites can be redeveloped. Generally this is into low- to medium-density co-located data centre capacity, where proximity to customers’ operations is an important factor. The upgrades to infrastructure are also critical, and having multiple sites to enable effective consolidation is one solution we are in the process of deploying with clients.

At BCS we pride ourselves on bringing novel solutions to problems, and we are bringing our experience of expanding capacity in live data centres to an exciting new development in East London. This will involve a full infrastructure upgrade for the existing data centre (UPS and generators) while the data centre remains live. The new infrastructure will then also have capacity to support a sizeable new development (c. 50MW) following demolition of an existing structure elsewhere on site.

Conclusion

Overall, the increased adoption of edge computing and supporting data centre space will pose a challenge to clients moving away from traditional or hyperscale data centres to co-located spaces in complex city or live environments as part of a broader delivery strategy. The BCS team is expanding and evolving, and we all look forward to meeting these challenges and supporting clients in their decision making, bringing insight and best practice to their projects.

Bibliography

[1] Gartner Inc, “What Edge Computing Means for Infrastructure and Operations Leaders,” 03 October 2018. [Online]. Available: https://www.gartner.com/smarterwithgartner/what-edge-computing-means-for-infrastructure-and-operations-leaders. [Accessed 25 February 2022].

[2] Statista, "Volume of data/information created, captured, copied, and consumed worldwide from 2010 to 2025," Statista, 2022. [Online]. Available: https://www.statista.com/statistics/871513/worldwide-data-created/. [Accessed 25 February 2022].